AI Transcription in 2026: How Local Models Beat Cloud Services

AI transcription accuracy has shifted. Local models like Whisper and Parakeet now match or beat cloud services — with better privacy and no subscription fees.

"Local AI can't match cloud accuracy." That was a reasonable position in 2022. Whisper had just been released, local hardware was barely fast enough to run the large models, and cloud services had years of infrastructure advantage behind them.

It's not the right position anymore.

AI transcription is the process of converting spoken audio into text using machine learning models — specifically, transformer-based neural networks trained on millions of hours of speech data. Today, the same models that cloud services run on their servers can run locally on a MacBook. The accuracy gap has closed. What remains are differences in privacy, offline capability, and cost — and on those dimensions, local has won.

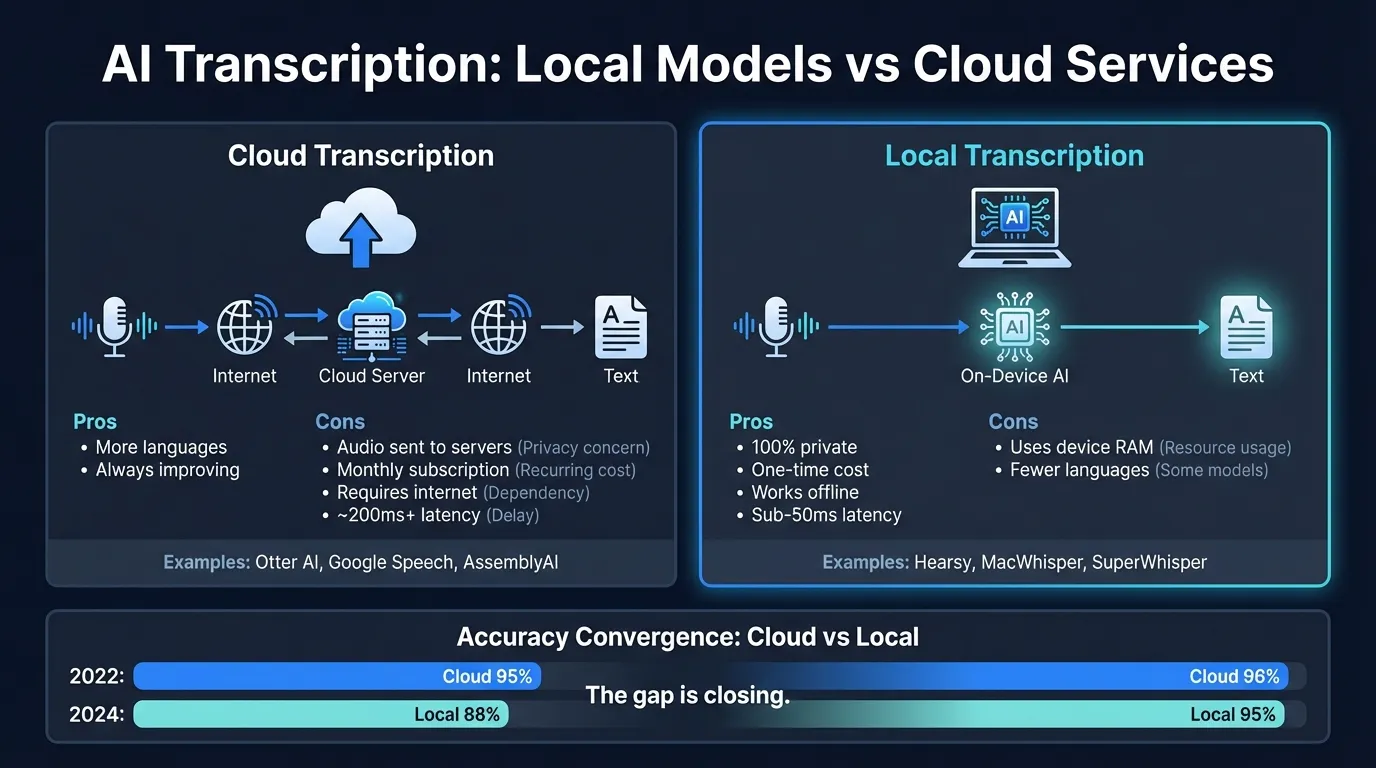

Here's how local and cloud AI transcription compare across the key dimensions:

What changed

The shift happened in three stages.

OpenAI Whisper (September 2022) was the first widely available open-source model that matched commercial cloud services on accuracy. Trained on 680,000 hours of multilingual audio, it introduced zero-shot transcription that required no voice training and handled 99 languages. It was MIT-licensed, meaning any developer could build on it. Cloud providers still had better hardware for running it, since local machines were slow, but the underlying model was identical.

Apple Silicon closed the hardware gap. M1, M2, M3, and M4 chips include dedicated Neural Engine cores optimized for the matrix multiplication at the heart of transformer inference. Whisper Large V3 runs at real-time speed or faster on an M2 MacBook Pro. The constraint that made cloud processing necessary — local hardware couldn't run large models quickly enough — disappeared.

NVIDIA Parakeet changed what "local" meant for speed. NVIDIA released Parakeet TDT in 2024 under Apache 2.0 license. Unlike Whisper's chunk-based processing, Parakeet uses token-and-duration prediction that enables streaming output — text appears while you're still speaking. Parakeet TDT 0.6B v2 achieves 1.69% word error rate on the LibriSpeech clean benchmark (NVIDIA, 2025) and processes audio at over 3,000 times real-time speed. On Apple Silicon, streaming latency is under 50ms.
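
To make the throughput figure concrete, the sketch below converts a real-time multiple into wall-clock processing time. The function name `processing_seconds` is hypothetical, and the 3,000x value is simply the figure quoted above; what a particular machine achieves will vary.

```python
def processing_seconds(audio_seconds: float, realtime_multiple: float) -> float:
    """Wall-clock time needed to transcribe audio at a given real-time multiple."""
    if realtime_multiple <= 0:
        raise ValueError("realtime_multiple must be positive")
    return audio_seconds / realtime_multiple


one_hour = 60 * 60  # seconds of audio

# At 3,000x real time, an hour of audio takes about 1.2 seconds to process.
print(processing_seconds(one_hour, 3000))

# At exactly real-time speed (1x), the same hour takes a full hour.
print(processing_seconds(one_hour, 1))
```

A multiple of 1 is the threshold for live dictation: anything above it means the model keeps up with speech as it arrives.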

The result: local AI transcription that beats most cloud services on accuracy and responds faster than any cloud can, because there's no network round-trip.

Local vs cloud: how they work

Local AI transcription loads the speech recognition model into your device's RAM and runs it on the GPU or Neural Engine. When you speak, audio is captured by your microphone, processed entirely on-device, and output as text. The audio never leaves your computer. No internet connection required.

Cloud AI transcription captures audio, compresses it, and sends it to a server. The server runs the model and returns the transcription. You see the result after a network round-trip — typically 200-500ms under good conditions, longer on slow connections or overloaded servers.

| Factor | Local | Cloud |
|---|---|---|
| Audio storage | On-device only | Sent to external servers |
| Internet required | No | Yes |
| Latency | Under 50ms (Parakeet) | 200-500ms + network |
| Accuracy (English, clear audio) | 1.69% WER (Parakeet TDT v2) | Varies by service |
| Language support | English (Parakeet) or 99 languages (Whisper) | Varies by service |
| Cost model | One-time purchase | Per-minute or subscription |
| Offline capability | Yes | No |
| Real-time streaming | Yes | Some services |

The accuracy comparison

The narrative that cloud services are more accurate than local models was true in 2021. It has been wrong since at least 2023.

Whisper Large V3 achieves approximately 2.7% word error rate on the LibriSpeech test-clean benchmark — the standard academic dataset for English speech recognition accuracy. This model runs on your local Mac, not a cloud server.

NVIDIA Parakeet TDT 0.6B v2 achieves 1.69% WER on the same benchmark (NVIDIA, 2025), outperforming Whisper Large V3 on both accuracy and speed. On release, it topped the Hugging Face Open ASR Leaderboard.
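
For readers unfamiliar with the metric, word error rate is the number of word-level substitutions, deletions, and insertions needed to turn the model's output into the reference transcript, divided by the number of reference words. The sketch below is a minimal illustration, not the scoring script used for the benchmark: `word_error_rate` is a hypothetical helper, and it only lowercases and splits on whitespace, whereas published evaluations typically apply fuller text normalization.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Word-level Levenshtein distance, keeping only one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "the quick brown fox jumps over the lazy dog"
hypothesis = "the quick brown fox jumped over lazy dog"

# One substitution (jumps -> jumped) and one deletion (the): 2 errors in 9 words.
print(round(word_error_rate(reference, hypothesis), 3))  # 0.222
```

Read this way, a 1.69% WER works out to roughly 17 word errors per 1,000 words of reference text.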

Cloud services that use OpenAI's speech API are running Whisper-equivalent or Whisper-based models. You get the same model accuracy, plus network latency, plus per-minute charges. The original argument for cloud was never that cloud models were fundamentally superior — it was that local hardware couldn't run the large models. That argument is gone.

OpenAI released GPT-4o-transcribe in March 2025, a model with lower error rates than Whisper on difficult audio. This is a real cloud advantage, but a narrow one. The model is too large to run on consumer hardware, and its edge shows up specifically on accented speech, high background noise, and specialized vocabulary. For standard dictation of clear English speech, Parakeet matches or beats it.

Where cloud still has an accuracy edge:

- Heavy background noise (busy cafes, outdoor recording)

- Strongly accented speech, especially non-native English

- Highly specialized vocabulary: medical, legal, financial terminology

- Very long recordings where broader context helps

Where local models match or beat cloud:

- Clear speech in a quiet environment

- Standard dictation and note-taking

- Real-time streaming for live typing

- English-only use cases (Parakeet)

Privacy: the structural problem with cloud

Cloud transcription has a structural privacy problem that better accuracy doesn't fix.

When you use a cloud transcription service, your audio goes to a server. That means: transmission over a network (which can be intercepted), storage on third-party infrastructure (subject to that company's data policies), and potential use for model improvement depending on the service's terms of service.

This matters differently depending on what you're dictating. Transcribing a podcast episode — minimal concern. Medical consultation notes, legal strategy, financial discussions, anything confidential — the implications are real.

Local AI transcription removes this issue structurally. Audio is captured, processed on-device, and output as text. It never exists on any server. You can verify this with a network monitor like Little Snitch: a properly built local dictation app makes no outbound connections during transcription.

For users in regulated settings (healthcare under HIPAA, legal work, organizations subject to GDPR or SOC 2 audits), local processing doesn't just feel safer. It simplifies compliance. Audio that never leaves your device avoids most requirements tied to transmitting data to a third party and storing it there, though obligations for data kept on the device itself still apply.

Cost: subscription vs one-time

Cloud AI transcription services have a structural cost problem too: servers cost money to run, so they charge you accordingly — per minute of audio or per month.

Consumer cloud apps like Otter.ai and Wispr Flow use monthly subscription pricing. API-based services charge per minute of audio processed. For occasional use, these amounts are modest. For daily dictation — 30 to 60 minutes per workday — subscription costs compound into hundreds of dollars per year.

Local AI transcription apps can charge a one-time fee because there's no per-use server cost. Once you own the app, transcribing 1,000 hours costs the same as transcribing 1 hour: nothing extra.

For light users, the difference is marginal. For people who dictate regularly as part of their workflow, the lifetime cost comparison between a one-time purchase and a subscription isn't close.
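
The lifetime comparison is simple arithmetic, sketched below. The prices are hypothetical placeholders chosen for illustration, not quotes from any vendor, and the helper names `breakeven_months` and `subscription_total` are made up for this example.

```python
import math


def breakeven_months(one_time_price: float, monthly_fee: float) -> int:
    """First month in which cumulative subscription fees reach the one-time price."""
    if monthly_fee <= 0:
        raise ValueError("monthly_fee must be positive")
    return math.ceil(one_time_price / monthly_fee)


def subscription_total(monthly_fee: float, months: int) -> float:
    """Cumulative subscription cost after the given number of months."""
    return monthly_fee * months


# Hypothetical prices for illustration only.
ONE_TIME_PRICE = 50.0  # assumed one-time app price
MONTHLY_FEE = 15.0     # assumed subscription price per month

print(breakeven_months(ONE_TIME_PRICE, MONTHLY_FEE))  # 4
print(subscription_total(MONTHLY_FEE, 36))            # 540.0
```

Substitute the actual prices of the apps you are comparing; the gap widens every month the subscription runs past the break-even point.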

When cloud AI transcription still makes sense

Despite the local advantage on accuracy, privacy, and cost, cloud transcription is the better choice in specific scenarios.

You need the best possible accuracy on difficult audio. GPT-4o-transcribe handles heavy accents, background noise, and specialized vocabulary better than current local models. If you're transcribing interviews recorded in noisy environments, or medical dictation with complex terminology, cloud with GPT-4o-transcribe is worth the trade-off.

You need batch processing at scale. Cloud services handle large queues — hundreds of hours of recordings — without tying up your machine. If you're processing meeting archives or podcast back-catalogs, AssemblyAI and Deepgram's APIs are built for this.

You need speaker diarization. Identifying who said what in multi-speaker recordings requires models that most local apps don't ship. Cloud services handle this well.

You're on older or non-Apple-Silicon hardware. On Intel Macs or non-Apple computers, running Whisper Large locally is significantly slower. Cloud processing is faster when local hardware struggles to run the models.

You're on a shared or temporary machine. Web-based cloud transcription tools need no installation.

Real-time dictation: where local wins outright

For system-wide real-time dictation — pressing a hotkey and typing by voice into any app — local models have the practical argument locked up.

Cloud transcription for real-time use requires sending audio chunks to a server and waiting for text back. Even under ideal conditions, this adds 200-500ms of latency per chunk. That's noticeable when you're trying to type fluidly. Local models with Parakeet stream text in under 50ms — faster than a cloud service can even receive your audio packet.
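
One way to picture these latencies is to ask how far the on-screen text trails your voice. The sketch below is a rough model under a stated assumption: a speaking rate of 150 words per minute, which is an illustrative value and not a figure from this article. The latency values are the ones quoted above, and `words_behind` is a hypothetical helper.

```python
def words_behind(latency_ms: float, words_per_minute: float = 150.0) -> float:
    """Words spoken while waiting for the text of an earlier word to appear."""
    if latency_ms < 0 or words_per_minute <= 0:
        raise ValueError("latency must be >= 0 and speaking rate must be > 0")
    words_per_second = words_per_minute / 60.0
    return words_per_second * latency_ms / 1000.0


scenarios = [
    ("local (Parakeet)", 50),
    ("cloud, low end", 200),
    ("cloud, high end", 500),
]

for label, latency_ms in scenarios:
    print(f"{label}: {words_behind(latency_ms):.2f} words behind")
```

At the assumed speaking rate, the high end of the cloud range leaves the text more than a full word behind, while the local figure stays at a small fraction of a word.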

Apps like Hearsy and SuperWhisper run local Parakeet and Whisper models for exactly this reason. The result is dictation that feels instantaneous, works on a plane or in a building with no Wi-Fi, and never sends your voice to a server.

For meeting transcription and audio file processing — where you're not trying to type in real time — cloud services remain convenient and competitive.

AI transcription options compared

| App / Service | Type | Processing | Real-time dictation | Languages | Pricing |
|---|---|---|---|---|---|
| Hearsy | App (Mac) | Local | Yes | 99 (Whisper) / EN (Parakeet) | One-time |
| SuperWhisper | App (Mac) | Local | Yes | 99 languages | One-time |
| MacWhisper | App (Mac) | Local | File-based | 99 languages | One-time |
| Otter.ai | Cloud service | Cloud | Meetings only | English primary | Subscription |
| AssemblyAI | Cloud API | Cloud | Yes (streaming API) | 99+ languages | Per-minute |
| OpenAI Whisper API | Cloud API | Cloud | Yes (API) | 99 languages | Per-minute |
| OpenAI GPT-4o-transcribe | Cloud API | Cloud | Yes (API) | 57 languages | Per-minute |
| macOS Built-in | OS feature | Local | Yes | 50+ languages | Free |

Which to use

For real-time system-wide dictation on Mac: A local app with a Parakeet engine for English and a Whisper mode for multilingual use. Both Hearsy and SuperWhisper handle this well.

For meeting transcription and audio files: MacWhisper locally, or cloud services if privacy isn't a concern and you need speaker identification.

For difficult audio — heavy accents, background noise, medical jargon: Cloud with GPT-4o-transcribe has the accuracy edge on genuinely challenging recordings.

For privacy-sensitive content: Local, always. Medical notes, legal dictation, financial discussions — keep audio on-device.

For regulated industries (healthcare, legal, finance): Local processing simplifies compliance. Audio that stays on-device avoids most requirements tied to third-party transmission and storage.

The core shift: cloud AI transcription was better than local because local hardware couldn't run the models. That hardware constraint is gone. For the majority of use cases — clear speech, real-time dictation, standard English — local models match or beat cloud on accuracy, respond faster, cost less over time, and keep your audio on your device.

The cases where cloud wins are real and specific. If your use case is there, cloud is the right answer. For everything else, local has won the practical argument.

For a comparison of Mac dictation apps that run local models, see the best dictation software for Mac guide. For privacy implications of voice data in cloud services, see the voice data privacy guide. For setup steps on any of these local apps, see the voice recognition setup guide.

Frequently asked questions

What is AI transcription?

AI transcription is the conversion of spoken audio into text using machine learning models. Modern systems use transformer-based neural networks — primarily OpenAI Whisper or NVIDIA Parakeet — trained on millions of hours of speech data. These models run either locally on your device or on cloud servers, depending on the service you use.

Is local AI transcription as accurate as cloud?

For standard English speech, yes. NVIDIA Parakeet TDT 0.6B v2 achieves 1.69% word error rate on the LibriSpeech clean benchmark (NVIDIA, 2025). Whisper Large V3 achieves approximately 2.7% WER on the same benchmark. Cloud services using OpenAI's GPT-4o-transcribe model have an accuracy advantage specifically on difficult audio: heavy accents, background noise, and specialized vocabulary. For clear speech, local and cloud are comparable.

What is the difference between local and cloud AI transcription?

Local AI transcription runs the speech recognition model on your device — audio never leaves your computer. Cloud transcription sends audio to external servers for processing. For most use cases, accuracy is now comparable. The meaningful differences: privacy (local keeps audio on-device), offline capability (local works without internet), latency (local is faster for real-time streaming), and cost model (local apps use one-time pricing; cloud services charge per-minute or subscription).

Which AI transcription software is best for Mac in 2026?

For real-time system-wide dictation: Hearsy uses Parakeet for English (under 50ms latency) and Whisper for multilingual support. SuperWhisper and VoiceInk are Whisper-only alternatives. For meeting and audio file transcription: MacWhisper handles file-based work locally. Cloud services (AssemblyAI, Otter.ai) work well when privacy isn't a concern and you need features like speaker diarization.

Does cloud AI transcription send my audio to external servers?

Yes. Cloud AI transcription services — including Otter.ai, AssemblyAI, Deepgram, and OpenAI's Whisper API — process audio on their servers. Your voice data is transmitted over the network and stored on third-party infrastructure, subject to that service's data retention and privacy policies. Local apps process audio entirely on-device. Nothing is transmitted to any server.
